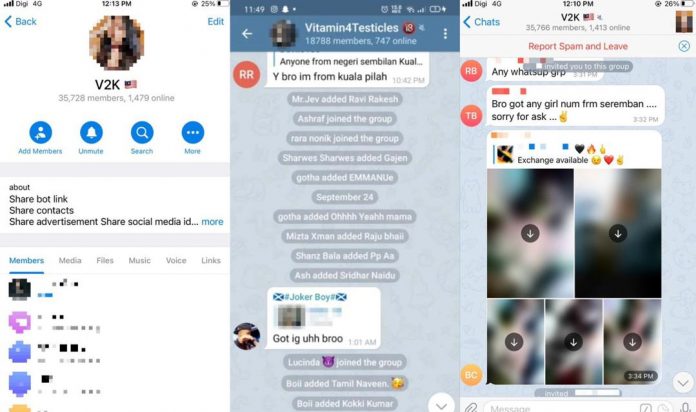

FacePlay is an app that offers many AI special effects, including AI silky dress-up, AI photo-taking, video face-changing, AI comics, video comics, etc. FacePlay takes you through thousands of styles and ever-changing lives. Come and experience the journey of your life: switch outfits with a smile and a gesture, and experience the changing of seasons and the passage of time in one video. New ones are added every day, allowing you to keep up with and play with the current hot trends, become a short-video expert and lead the trends in your circle of friends.

In their takedown notice, the developers further defend themselves, saying that they were only planning to sell the app in a controlled manner. They have also said the app is not that great and only works accurately in some situations. Should women be relieved that only a few of their photos can be converted into nudes? We’re not sure.

Further, the letter goes on to acknowledge that if too many people were to use it (it mentions around 500,000, which is not a small number at all), there is a chance it will be misused. This raises the question of why the developers think it is a problem only if too many people use the app. Be it 100 people using the app or half a million, the idea behind DeepNude is deeply problematic.

The developers have also said they don’t want to make money this way, adding that they will not be licensing the app to anyone else and will not be activating the premium versions either. They acknowledge that other developers could sell copies of DeepNude on the web, but insist that they themselves will not be selling the app. The letter ends by saying, ‘People who have not yet upgraded will receive a refund. The world is not yet ready for DeepNude.’ Ideally, the world should never be ready for an app like DeepNude, considering that it represents a violation of privacy and objectifies women.

Coming to the deep fake technology used to achieve this, it relies on more sophisticated tools afforded by artificial intelligence and machine learning. Deep fakes are not the crude Photoshopped images or badly edited videos that are easy to spot. They can fake someone’s voice, face and even body language, and add them to a video or an image. Deep fakes can accurately copy the mannerisms and voice of the person they are supposed to be imitating.

Deep fakes pose a serious problem for how content on the internet will be perceived, and can only aggravate the spread of misinformation. A deep fake video of Mark Zuckerberg recently went viral, showing exactly why this is a serious problem.

One of the biggest problems with deep fake AI is, of course, its potential for misuse and for harassing women. A recent article on HuffPost US revealed that ordinary women are already being harassed with deep fake technology, which is used to create fake pornographic videos of them. Some of these women have tried, unsuccessfully, to get the videos removed from the pornographic websites that host them. With an app like DeepNude on the scene, such harassment would have been even easier to carry out.

Further, the app’s Twitter account has this tagline: ‘The superpower you always wanted.’ Clearly, creating non-consensual nudes of women is considered a superpower.
